Do you use Facebook ads to promote your business?

Are you looking to increase your ROI?

Online advertising can be the best way to scale the growth of your business.

The most important step is to figure out your best targeting options, and the way to do that is through testing.

In this article I'll explain what I've learned through my own testing and share five split tests you can use to quickly discover your ideal target audience on Facebook.

Why Use Split Tests?

If you've ever tried to advertise your business on Google AdWords or Facebook, you may not have been satisfied with the results. The truth is, as efficient as they are, both platforms have become quite complex. Succeeding with paid marketing takes more and more effort.

On AdWords, you need to figure out what keywords work best. On Facebook, you target potential clients based on users' profiles. After hours of trial and error and thousands of dollars invested, I've discovered that nothing is obvious.

That's where split testing (also known as A/B tests) can make all the difference.

Note: This article focuses on new client acquisition efforts aimed at non-fans, not retargeting.

Before You Start Testing

Here are four steps to ensure you use your time most efficiently when split testing.

1. Never sell to cold leads

When you advertise on Google AdWords, you can use sales ads, because users who searched for “social media management tool” are probably looking for one. So you can try to sell your awesome product without warming them up, since they're already hot prospects!

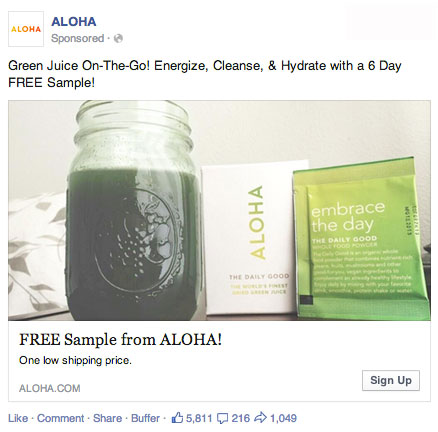

Unlike Google AdWords, Facebook ads do not target intent. They target users' profiles. For example, you may target users who look like your clients based on very similar characteristics, but that does not mean that they are currently looking to buy what you have to sell. Most of them are probably not. Trying to sell your product to most of these people is likely a waste of money.

To artificially create that “intent” targeting with Facebook, you need to first advertise for something that qualifies the intent of an audience, and then retarget those who have clicked on that first ad with a sales ad. You can use various strategies for that first “intent qualification” campaign: free ebook, blog content, free tool, reviews, etc. You'll have to figure out which type of message/content will get you the most qualified leads to retarget.

2. Choose a big audience

To optimize a Facebook ad campaign, especially with split testing, you need to have a large audience to target. Split testing won't help if you're targeting an audience of 5,000 people. There won't be enough users to make your split test statistically significant. As a rule of thumb, a minimum of 100,000 users is required. The bigger the better.
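To see why audience size matters, here is a minimal sketch of a standard two-proportion z-test in Python. The function name and all of the numbers are hypothetical and chosen for illustration only. It also optimistically assumes every user in the audience is reached, which real campaigns won't achieve.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both variants convert equally well.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical data: the same 2.0% vs. 2.6% conversion rates at two audience sizes.
_, p_small = two_proportion_z_test(50, 2_500, 65, 2_500)          # 5,000 users split in two
_, p_large = two_proportion_z_test(1_000, 50_000, 1_300, 50_000)  # 100,000 users split in two

print(f"5,000-user audience:   p = {p_small:.3f}")
print(f"100,000-user audience: p = {p_large:.2e}")
```

With these made-up figures, the same gap between the two variants is indistinguishable from noise in the small audience (p above the usual 0.05 threshold) but clearly significant in the large one.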

3. Track the entire funnel

When split testing an ad campaign, never rely on the results of the first step of your funnel, or even the second step. Test the entire sales funnel.

If you only measure the click-through rate (CTR) and its associated cost per click (CPC), or even the CTR and the lead conversion rate, you have a one-in-two chance of relying on highly misleading data. If the goal is revenue, make sure to measure average purchase and lifetime value too.

Too often, marketers get stuck at CTR, CPC or lead conversion to optimize their Facebook ads. They tweak their visuals to get more clicks, test some colored borders and spend a lot of time optimizing things that will have no impact on the bottom line—or worse, have a negative impact.

Sometimes, a lower CTR and higher CPC can lead to higher revenue or lifetime value just because your ad attracted the “right” user. Because there are fewer “right” users, CTR will be lower and CPC higher, but every step after that will look much better.

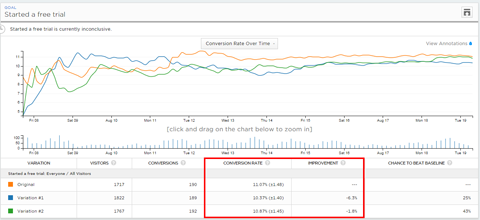

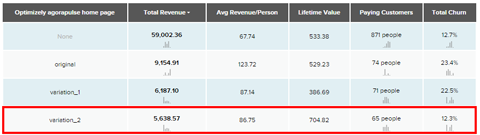

We conducted a split test on three variations of the same call to action. If you just look at the surface (clicks and lead conversions), neither of the two alternatives beats the original. But when you dig deeper into the funnel and look at the lifetime value and churn rate in terms of revenue, the second variation beats the original by far.
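The idea of measuring the whole funnel can be sketched in a few lines of Python. The `Variant` class, its field names and every number below are hypothetical, not the figures from the test described above. They simply show how a variation can lose on CTR and CPC yet win on revenue.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    impressions: int
    clicks: int
    spend: float      # total ad spend in dollars
    leads: int        # e.g. free trials started
    customers: int    # leads that became paying clients
    revenue: float    # revenue attributed to those customers

    @property
    def ctr(self):
        return self.clicks / self.impressions

    @property
    def cpc(self):
        return self.spend / self.clicks

    @property
    def cost_per_lead(self):
        return self.spend / self.leads

    @property
    def revenue_per_dollar(self):
        return self.revenue / self.spend


# Hypothetical results for two variations with the same budget.
original = Variant("original", 100_000, 2_000, 1_000.0, 200, 10, 1_500.0)
variation = Variant("variation", 100_000, 1_500, 1_000.0, 150, 20, 3_000.0)

for v in (original, variation):
    print(
        f"{v.name:10s} CTR {v.ctr:.2%}  CPC ${v.cpc:.2f}  "
        f"cost/lead ${v.cost_per_lead:.2f}  revenue per $1 spent ${v.revenue_per_dollar:.2f}"
    )
```

Judged on the first three columns alone, the original looks like the winner. Only the last column, which needs revenue tracking, shows that the variation earns twice as much per dollar spent.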

4. Find the right tools

Putting the right tools in place will take a while, but it's worth it. If you don't, you're blind, and if you're blind, you're probably wasting most of your advertising budget. And that is not sustainable.

Facebook Power Editor. First of all, you need to use Facebook Power Editor, not the regular ad manager. The main reason is that only Power Editor will allow you to track more than one conversion pixel. And to test ads, you need to be able to track both leads (free trials, add to cart, email subscribers, etc.) and new clients (subscription started, product purchased, etc.). If you only track one, you'll miss at least half of the picture, and it may be the most important half.

Tracking tools. Next, put all of the tracking tools in place. Install the Facebook conversion pixels on your website, and set up Google Analytics with both goal tracking (to track the number of conversions) and ecommerce integration (to track the revenue generated). Since Google Analytics is not always enough, especially if you are in a subscription business, set up another analytics tool such as KISSmetrics or Mixpanel.

Reporting tools. To really split test all of the elements of your Facebook ads, you need to go beyond the basic Facebook functionality. For Facebook ads reporting, you need to export your reports into Excel and spend a significant amount of time digging into dozens of raw data sets to find the gems.

I recommend AdEspresso for valuable reporting insights. It's an affordable tool that makes split testing Facebook ads easy and efficient.

AdEspresso recently shared with me the list of items that statistically had the most impact on all split tests conducted on their platform. Here's the list, ranked from the highest-impact item to the lowest:

1. Countries

2. Precise interests

3. Images

4. User OS

5. Age range

6. Genders

7. Broad categories

8. Titles

9. Cities

10. User device

11. Body copy

12. Sexual preference (interested in)

13. Relationship status

14. Regions

15. Education

16. Language

This list obviously doesn't apply to every split test, but there's value in the rankings. As a rule of thumb, you can estimate that you'll have more insightful results if you split test a country or an interest rather than an education level or a language.

Now that the tools are in place, you're ready to begin. Here are the five split tests I recommend for raising your ROI.

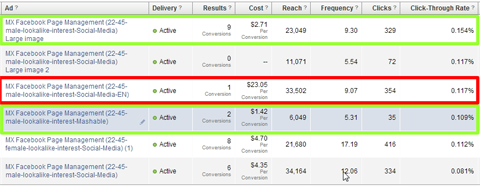

#1: Test Interests

Whether or not you use interest targeting in connection with custom audiences or lookalike audiences, it's a good idea to test their effectiveness. Choose two different (although similar) interests to test.

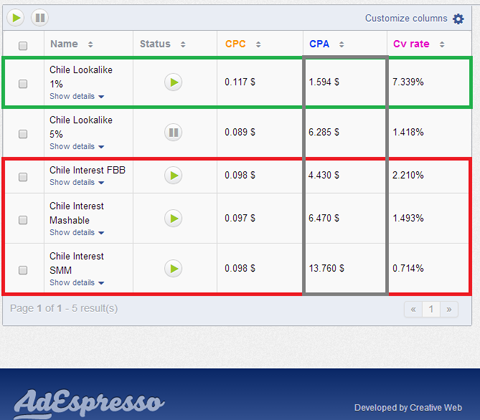

For example, I targeted interests like “Facebook for business” and “social media marketing.” When I started to split test interests against each other, I realized that their effectiveness was far from what I expected.

As these interests are made of the fans of certain pages (like Social Media Examiner) or made up by Facebook (like social media marketing), the quality of the user base they represent can be anywhere from high quality to very poor. You have no idea which ones will be a good fit for your business unless you split test.

Comparing lookalike audiences also gave us a different picture, and they performed better than the interests.

Expert Tip: Interests alone tend not to perform very well for me, and are usually quite expensive to target. The best option is to combine a lookalike audience, based on a custom audience of existing clients, with a relevant interest.

#2: Test Gender

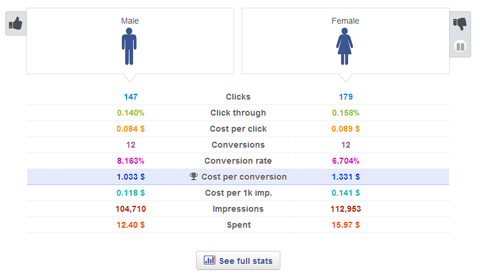

Since it's 2014 and men and women should be equal in the workplace, I was convinced that targeting men and women separately wouldn't do us any good. I was wrong. Split test gender to see if there's a difference.

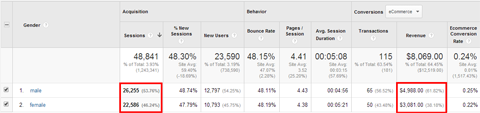

By digging into our Google Analytics accounts, I realized that men were really giving us more revenue overall.

The huge difference in male vs. female conversions was enough of a sign for us to start split testing this criterion in our ads.

It could be that more men have jobs with access to the company's credit card or they're more often making the call when it comes to buying online software. I don't know. However, I also noticed that leads were a bit less expensive for men than women.

Since acquiring male leads is cheaper than acquiring female leads, and men spend more at the end of the day, this informs my ad strategy. Every time I have a sufficiently big audience to target, I prioritize men over women, at least at first.

Note: Remember, this division is only valid if you have a large audience to target. If the audience is too small, splitting it in two will not be a good idea.

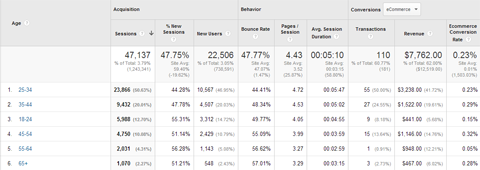

#3: Test Age Range

Age is another factor to test. When I discovered the effect of gender on my advertising ROI, I decided to start testing on age range, which is something I hadn't done in the past.

The results were not surprising. I learned people between 18 and 21 were clicking and testing our software a lot, but paying very little.

That age range converted well into free trials. However, people between 22 and 45 converted the most from free trials to paying clients. I had very few trials and revenue from those older than 45.

Take the numbers from your findings and adjust accordingly. After this test, I started to focus on people between ages 22 and 45 only.
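If your analytics tool lets you export trial users with their age, a breakdown like the one described above can be approximated with a short script. This is only a sketch: the function names, the input format (age plus a paid flag) and the sample records are assumptions for illustration, and the buckets simply mirror the age ranges mentioned in this section.

```python
from collections import defaultdict


def age_bucket(age):
    """Map an age to one of the ranges discussed in this section."""
    if age < 22:
        return "18-21"
    if age <= 45:
        return "22-45"
    return "46+"


def trial_to_paid_by_age(trials):
    """trials: iterable of (age, became_paying_client) tuples."""
    counts = defaultdict(lambda: [0, 0])  # bucket -> [trials, paying clients]
    for age, paid in trials:
        bucket = age_bucket(age)
        counts[bucket][0] += 1
        counts[bucket][1] += int(paid)
    return {bucket: paid / total for bucket, (total, paid) in counts.items()}


# Hypothetical export of free trial users.
sample = [
    (19, False), (20, False), (21, True), (20, False),
    (30, True), (41, False), (35, True),
    (52, False),
]

rates = trial_to_paid_by_age(sample)
for bucket in sorted(rates):
    print(f"{bucket}: {rates[bucket]:.0%} of trials became paying clients")
```

A real export would need far more rows per bucket before the differences mean anything, for the same audience-size reasons covered earlier.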

#4: Test Language

Keep in mind your target users may not all come from a country where English is the primary language.

I target prospects all over the globe, and advertise in countries in regions such as Europe and South America. We provide our service in five languages, so I tend to localize marketing efforts, including ad copy and landing pages.

Friends in Mexico and Argentina told me that our target users in those two countries would probably speak English, and that everything in English may have a better image than something in Spanish. So I decided to split test it.

The exact same ad and corresponding landing page were served in both English and Spanish to a Mexican audience. We learned it was much more expensive to acquire leads from the same audience in English than it was in their native language.

Never take one language test as a rule. I did the same test in Argentina with a different result. Keep in mind there may be cultural differences regarding foreign languages from one country to another.

#5: Test Landing Pages

Never send your advertising traffic to your home page. Create landing pages and optimize them.

Do a little research. Click on the Facebook ads from other players in your field. You'll be amazed at how many send ad traffic to their home page. Even when the ad has a feature- or value-specific message, they still go to the home page: one without an appropriate message. This is a wasted opportunity for the advertiser.

To give you a practical example of the effect of a dedicated landing page, we did a split test targeting users in Dubai. We had the same ad go alternately to our home page and to a dedicated landing page that simply said, “Managing Facebook pages in Dubai? You need [our tools]”. The result: the conversion cost was $6.50 on the home page and $3.00 on the dedicated landing page!
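To put the Dubai result in relative terms, the saving can be worked out with one line of arithmetic. The helper name below is hypothetical. The two dollar figures are the ones quoted above.

```python
def percent_reduction(before, after):
    """Relative drop from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


# Cost per conversion: home page vs. dedicated landing page.
landing_page_saving = percent_reduction(6.50, 3.00)
print(f"{landing_page_saving:.1f}% lower cost per conversion")  # prints "53.8% lower cost per conversion"
```

The same helper works for any before-and-after cost comparison in your own tests.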

Based on other tests we've conducted, the gap is even bigger when the ad is specific to a feature or a value proposition that your home page will not clearly address.

Split tests are not just about the ads themselves. They're about the destination. Take every opportunity to customize and optimize your landing pages.

Conclusion

After three months of intense split testing and $15,000 in ad spend, we were able to reduce our lead acquisition cost by 66%. Yes, you read that correctly.

Going after the same targets in the same countries, selling the same product, a free trial user who would have cost us $6.30 months ago is now costing us $2.00. We do acquire slightly fewer users, but the cost reduction is so drastic that we're more than happy with that.

Split testing may require time and effort, but it will save time and money in the long haul as you increase your ROI.

What do you think? Have you conducted split tests on your Facebook ads? Did you notice results you didn't expect? Do you have a little trade secret you'd like to share about your success with Facebook ads? Please share your advice and experiences in the comments.
